About

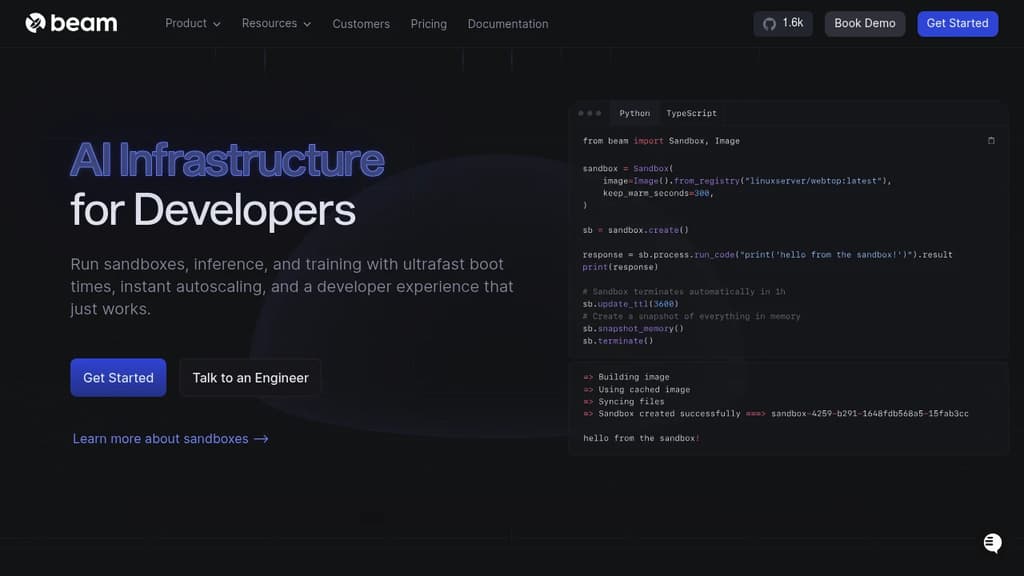

AI infrastructure designed for developers who need speed, reliability, and seamless scaling. Run sandboxes, inference, and training workloads with ultrafast boot times and instant autoscaling that adapts to your traffic patterns.

The platform supports use cases ranging from custom model inference and LLM training to web scraping and Streamlit apps. It is 100% open source and can run on the provider's cloud or your own. The developer-first experience includes easy local debugging, one-line hardware switching, and seamless CI/CD integration.

Trusted by leading AI companies for its developer experience and reliability, it helps teams ship faster without the complexity of managing GPU infrastructure.